Staffing and Procurement for Fast Flow

Last year I was doing an architecture review for a mid-sized German company. Good people, solid engineering culture, real investment in modernization. They had adopted Team Topologies and restructured around streams of value, and they even had a lot of collaboration between engineers and domain experts. On paper, everything looked right.

Then I asked who owned a certain processing service.

Silence. Then the engineering lead said: "Technically, the vendor does. The contract was signed eighteen months before we did any of this."

It turned out their most strategically important domain, the thing that differentiated their product, was being maintained by an offshore team on a fixed-price contract with quarterly change requests. The team topology diagram showed a stream-aligned team. The org chart showed a vendor relationship. The vendor won.

I hear versions of this constantly. "Every feature takes three times as long as it should because the vendor needs a change request for anything outside the original spec." Or: "We have a great architecture on paper but the problem is the vendor relationship." Or, the one that makes me wince every time: "The outsourcing contract was signed before we knew what we were building."

Architecture strategy and procurement strategy are made by different people, at different times, with different incentives, and almost never in conversation with each other. The result is a systematic destruction of the conditions that fast flow requires.

This post is about that problem, about a framework of lenses and heuristics for resolving it, and about what artificial intelligence adds to and removes from the decision in 2026.

Why Team Topologies Is Not Enough

Many readers will be familiar with Team Topologies, Matthew Skelton and Manuel Pais's influential approach to organising software teams around flow. If you work in software architecture or engineering leadership, it has almost certainly shaped how you think about team structures.

Team Topologies gives us the stream-aligned team, the platform team, the enabling team, and the complicated subsystem team. It gives us interaction modes: collaboration, X-as-a-Service, facilitation. It gives us cognitive load as the key constraint on team design. It is a powerful and well-grounded framework.

What it does not do is explicitly address staffing and procurement. It assumes teams exist and describes how to structure and connect them. The question of who is in those teams (internal engineers, contractors, outsourced providers) and who makes that decision, and by what criteria, is largely outside its scope.

This matters because in practice the team topology is often determined by the sourcing decision, not the other way around. A stream-aligned team built from a fixed-price outsourcing contract is structurally different from a stream-aligned team of permanent internal engineers, even if both appear identical on the topology diagram. The interaction mode that is theoretically possible between them is different. The cognitive load they can sustain is different. The depth of domain knowledge they can accumulate is different.

Team Topologies describes the shape you want to arrive at. The framework I describe here helps you make the sourcing decisions that determine whether you can actually get there, and stay there.

The Paradox

Architecture, done well, makes specific demands of the organisational structure around it. It needs domain ownership by stable, long-term teams. It needs internal expertise in areas that differentiate the product. It needs autonomous teams with deep context — teams that can make decisions without needing to escalate, coordinate, or wait.

Procurement, operating in its own lane, makes a different set of demands. It optimises for cost. It favours vendor flexibility and the ability to rotate contractors. It tends toward centralised control and standardised contracts. It values speed of engagement over depth of relationship.

Nicole Forsgren, Jez Humble, and Gene Kim put the consequence of this gap precisely in Accelerate:

“the fact that software delivery performance matters provides a strong argument against outsourcing the development of software that is strategic to your business.”

The data does not just suggest this. It shows it clearly.

And yet the outsourcing of strategic software continues everywhere, because the people making the sourcing decision are not the people who understand which software is strategic and which is not. That gap between what architecture needs and what procurement does is where fast flow goes to die.

What does that look like concretely? It looks like a team that cannot deploy independently because a vendor controls a dependency. It looks like loss of domain knowledge when a contract ends and institutional memory walks out the door. It looks like security risk from external access to sensitive systems. It looks like communication friction consuming the cognitive budget that should be spent on the problem. And it looks like the best engineers leaving, because in organisations where the interesting work is always outsourced, what remains is integration glue and vendor management. Early in my career I changed jobs for exactly this reason: the career path on offer was “vendor management”, not engineering or architecture, and that was not what I aspired to.

Riding the Elevator

Now that the problem is visible, it is worth naming who needs to close the gap.

Gregor Hohpe, in The Software Architect Elevator, describes the architect's job as traversing the full height of the organisation: from the engine room where code is written, up to the penthouse where strategy is set, and back again. The architect who only lives in the engine room is technically excellent but strategically invisible. The architect who only visits the penthouse loses touch with delivery reality. The value comes from riding the elevator: carrying the language of delivery consequences into strategic decisions and the language of strategy into technical ones.

Staffing and procurement decisions happen in the penthouse. The people making them (procurement managers, finance committees, HR) are not trying to undermine architecture. They are optimising within their own frame of reference, and very often within their own bonus system, one that does not include the architectural consequences of their decisions. The architect's job is to ride the elevator and change that.

But let's be honest about why this doesn't happen. In most organisations, procurement reports to finance. Architecture reports to engineering or the CTO. These are different budget lines, different approval chains, often different vice presidents. By the time an architect hears about a sourcing decision, the RFP is already out or, worse, the contract is already signed. The architect is invited to the post-mortem, not the planning meeting. Changing this requires more than goodwill. It requires structural change in how sourcing decisions are governed.

The framework in this post is partly a vocabulary for that elevator ride. It gives architects a language for talking about sourcing that penthouse occupants can engage with, and it gives penthouse occupants a way to ask the questions that architecture needs them to ask before the contract is signed.

We have enough conceptual vocabulary from Domain-Driven Design, Cynefin and Wardley Maps to name these problems precisely and make better decisions. And in 2026, we have AI, which changes the decision space in ways that are not yet well understood.

The Framework

The approach I describe has three activities, performed in this order.

The first is Domain Exploration: identifying the meaningful areas of expertise and activity that constitute your product or organisation. This is not an architecture exercise. It is a collaborative exercise involving domain experts, UX practitioners, testers, and customer-facing staff. Methods like EventStorming and Domain Storytelling are well-suited here. The goal is not a diagram but a shared understanding of what you actually do and where the expertise lives. Three guiding questions: Is this something we do? Do we have a specific approach here? Is this an area with genuine expertise?

The second is Strategic Classification: examining each identified domain through three different lenses, each answering a different strategic question. I will come back to these lenses in detail.

The third is Decision Heuristics: mapping the classified domains to eight delivery options, with heuristics for each combination. The heuristics are not rules. They are starting points for the conversation — structured provocations that surface the right questions rather than mechanically producing the right answer.

The Three Lenses

Lens One: DDD Classifications

The Domain-Driven Design community gives us three domain types. The strategic question they answer is: Is this where we differentiate?

Core domains carry high mid-to-long-term differentiation. They are your competitive edge, the reason customers choose you over alternatives. Core domains are where you must invest most and protect most fiercely, and where organisational ownership matters most. Core domains are not stable designations. They can emerge, evolve, and occasionally become less Core over time as the market catches up.

Supporting domains enable your Core to function but are not themselves where you win in the market. They must work well, but they do not need to be best-in-class. The investment calculus is different.

Generic domains are what many organisations do the same way. There is no competitive advantage to building a better payroll system, a better identity management solution, or a better document storage layer unless you are in the business of payroll, identity, or document management. The market provides Generic domains. Your job is to consume them efficiently, not rebuild them.

The DDD-Crew's Core Domain Charts are a particularly useful tool here: a two-axis chart mapping Model Complexity against Business Differentiation, which makes the classification visible and debatable. What matters as much as the initial classification is the movement of domains over time. A domain that was Core can drift toward Supporting as expertise matures. A domain that felt Generic can become Core if you discover unexpected differentiation within it. The Core Domain Chart also exposes an important structural truth: Core domains live in a zone of high model complexity. They are, almost by definition, not simple — which has direct implications for how they can be sourced, implications the Cynefin lens will make precise.

Core Domain Charts by DDD-Crew: https://github.com/ddd-crew/core-domain-charts

Lens Two: Cynefin Framework

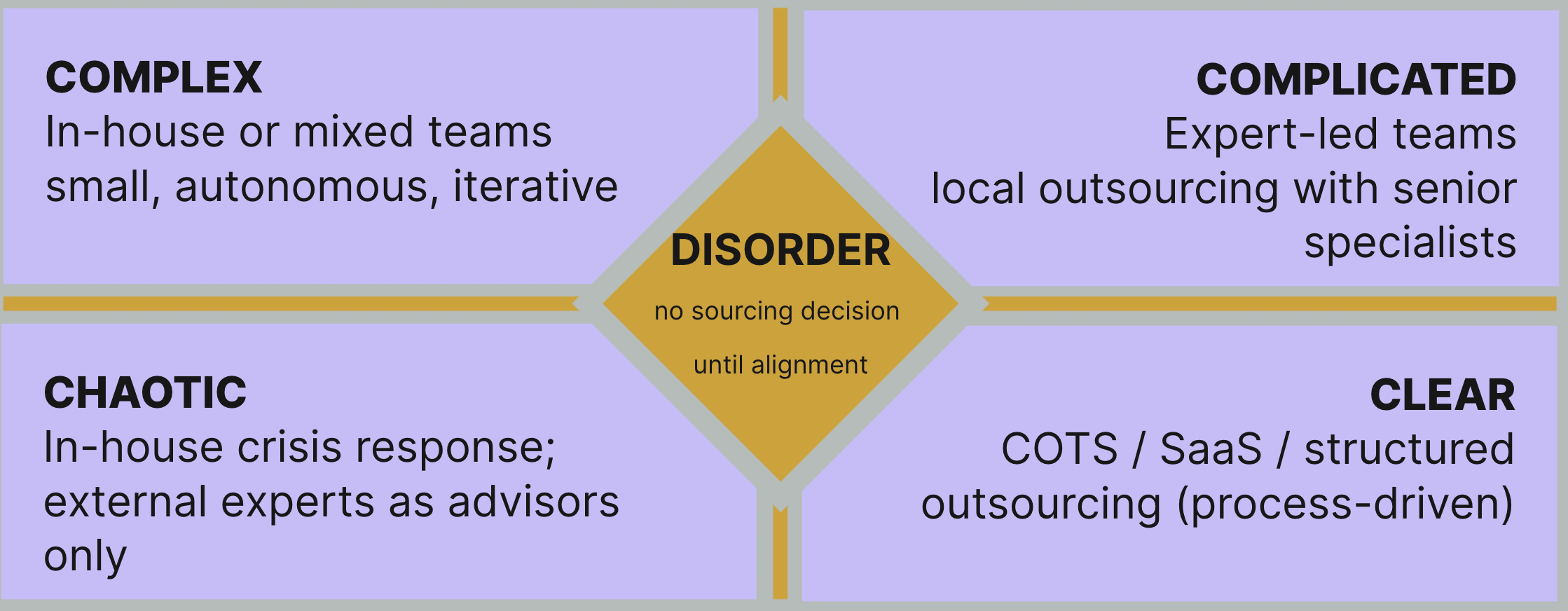

Where DDD asks about differentiation, Cynefin asks: Is this a predictable problem or an emergent one?

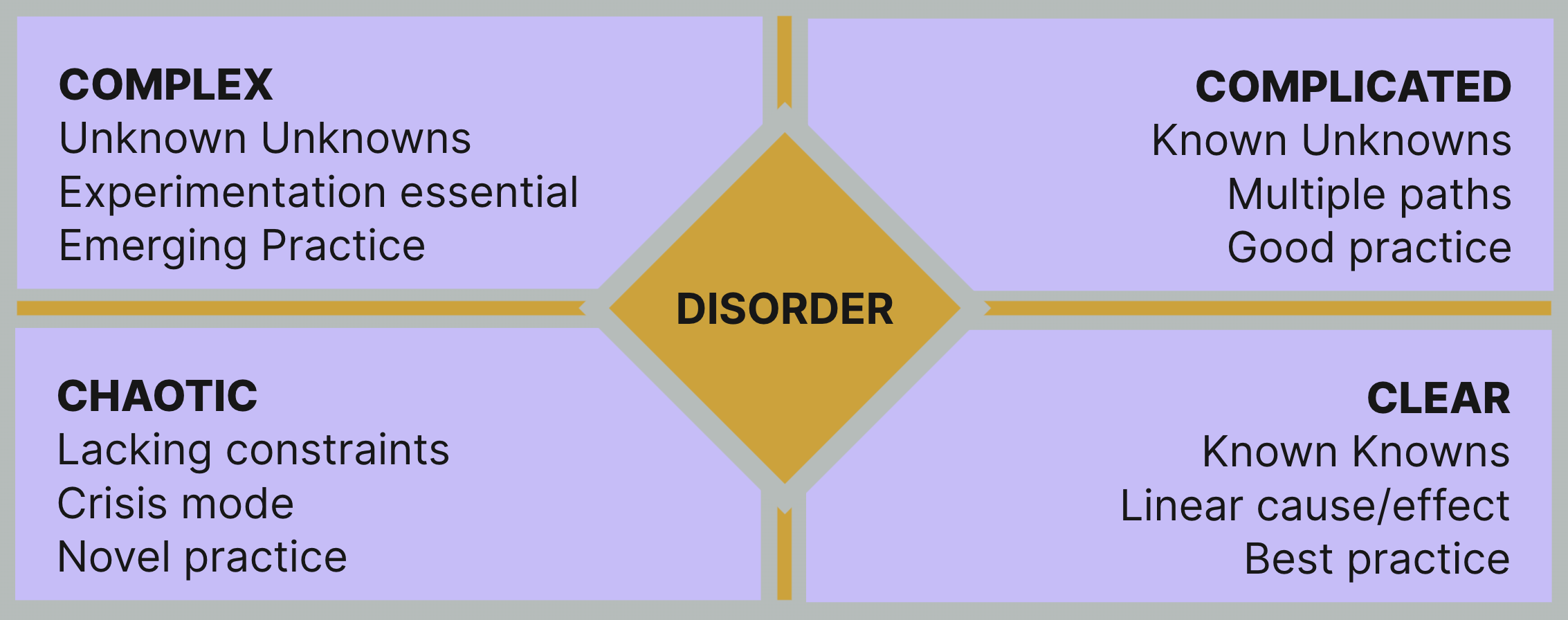

The Cynefin Framework gives us four relevant domains. Clear problems have known cause-and-effect relationships, linear paths to solution, and established best practice. Complicated problems have multiple valid paths, require expert judgement to navigate, and reward good practice. Complex problems are fundamentally different: outcomes are unpredictable, cause and effect can only be understood in retrospect, and emergent practice (probe, sense, respond) is the only viable approach. Chaotic situations require immediate action to establish constraint before any other classification becomes possible.

For sourcing decisions, Cynefin constrains what kind of relationship is possible. A Complex domain cannot be managed through upfront specification. Any sourcing model that assumes you can write a detailed statement of work, hand it to a vendor, and receive the right software at the end is not a sourcing model — it is an optimism exercise. The more unpredictable the domain, the closer the team must be to the organisation. Not as a preference. As a structural requirement for learning.

Cynefin Framework

Lens Three: Wardley Maps

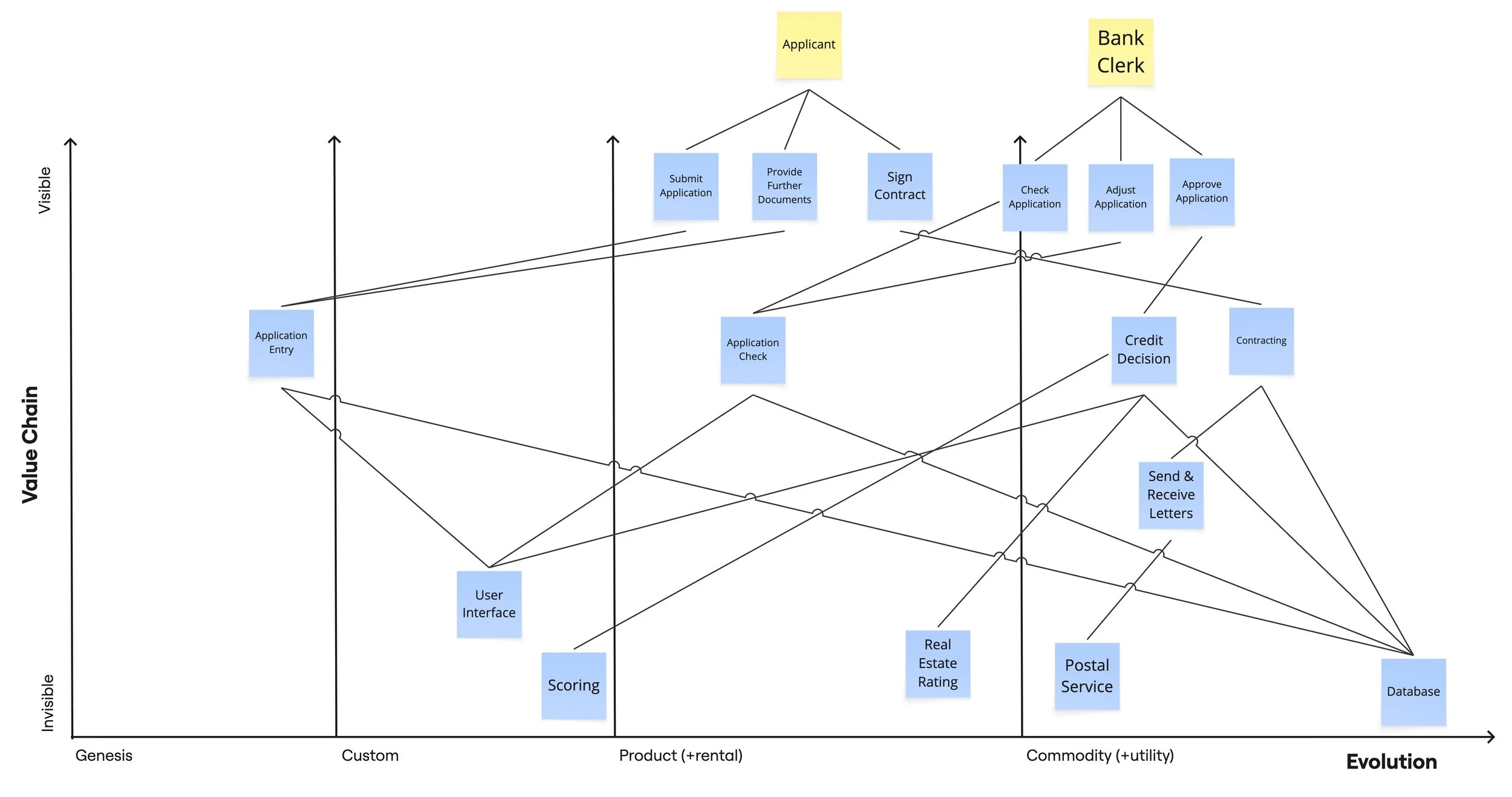

Wardley Maps answer a third question: *Does the market provide this at a given visibility level?*

The evolution axis of a Wardley Map runs from Genesis through Custom Build, through Product/Rental, to Commodity/Utility. Genesis components are novel, uncertain, and constantly changing. By definition, no one else has built them either. Custom Build components are growing and becoming more defined but remain scarce. Product/Rental components are stable, well-defined, and widely available. Commodity components are ubiquitous, standardised, and utility-like.

The visibility axis — how visible the component is in your value chain — adds an important dimension for sourcing. A high-visibility component at Product stage might still require internal ownership of judgement even if its execution can be outsourced, because its prominence in the customer experience makes its quality directly attributable to your organisation.

Simon Wardley's insight is that evolution is directional and inevitable. What is Genesis today is Commodity eventually. The strategic question is not just where a component is now, but where it is going — and whether your sourcing model matches both its current state and its trajectory.

Wardley Map

The Three Lenses, One Picture

Each lens answers a different question. DDD asks where to invest. Cynefin asks how to think. Wardley asks what the market provides. Together they give you a complete frame for the sourcing decision, constraining it from three directions simultaneously.
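One way to make the three classifications tangible is to record them side by side for each domain. The following is a minimal sketch in Python, not part of any of the three frameworks: the type names (`DomainType`, `Cynefin`, `Evolution`, `Classification`), the `high_visibility` flag, and the two example domains are all illustrative assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class DomainType(Enum):
    """DDD lens: is this where we differentiate?"""
    CORE = "core"
    SUPPORTING = "supporting"
    GENERIC = "generic"


class Cynefin(Enum):
    """Cynefin lens: is this a predictable problem or an emergent one?"""
    CLEAR = "clear"
    COMPLICATED = "complicated"
    COMPLEX = "complex"
    CHAOTIC = "chaotic"


class Evolution(Enum):
    """Wardley lens: does the market provide this?"""
    GENESIS = "genesis"
    CUSTOM_BUILD = "custom build"
    PRODUCT = "product/rental"
    COMMODITY = "commodity/utility"


@dataclass(frozen=True)
class Classification:
    """One domain seen through all three lenses (field names are illustrative)."""
    name: str
    ddd: DomainType
    cynefin: Cynefin
    evolution: Evolution
    high_visibility: bool = False

    def summary(self) -> str:
        return f"{self.name}: {self.ddd.value} + {self.cynefin.value} + {self.evolution.value}"


# Hypothetical example domains, for illustration only.
pricing = Classification("pricing engine", DomainType.CORE, Cynefin.COMPLEX,
                         Evolution.GENESIS, high_visibility=True)
payroll = Classification("payroll", DomainType.GENERIC, Cynefin.CLEAR,
                         Evolution.COMMODITY)

print(pricing.summary())
print(payroll.summary())
```

Writing the classification down this way has one practical benefit: it becomes something a workshop can argue about and revise, rather than an impression held by one person.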

The Eight Delivery Options and the Distance Problem

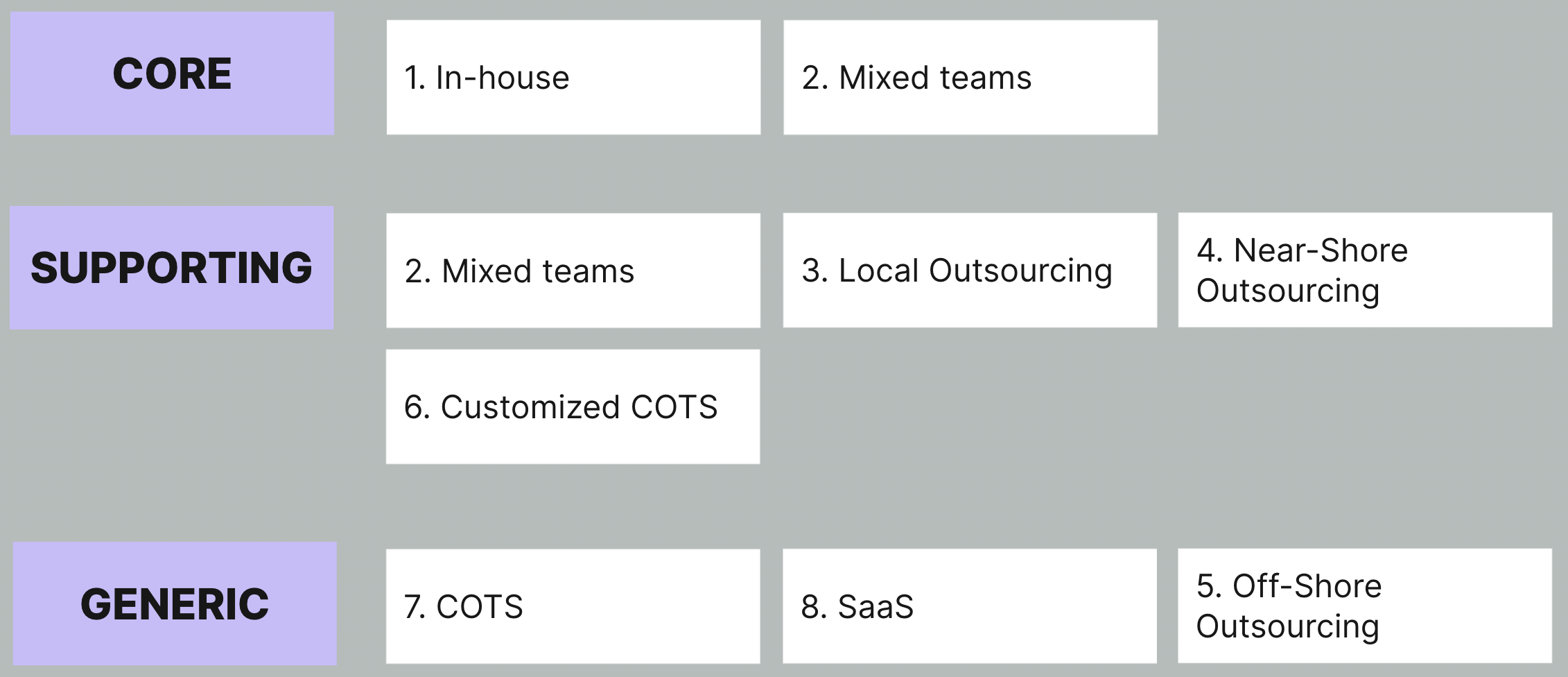

Before applying the lenses, we need to be precise about what we are choosing between. I use eight options, arranged along a spectrum from maximum internal ownership to maximum market dependence:

1. In-house development and ownership: your team, your codebase, your expertise

2. Mixed teams: internal engineers working alongside local service providers, with internal leadership

3. Local outsourced development: external team, geographic proximity, regular interaction possible

4. Near-shore outsourced development: external team in a nearby timezone, limited face-time

5. Off-shore outsourced development: external team in a distant timezone, significant coordination overhead

6. COTS with customisation: commercial off-the-shelf software, deliberately and selectively configured for your needs

7. COTS with minimal customisation: commercial software adopted largely as-is, with a deliberate commitment to refuse further customisation

8. Software-as-a-Service (SaaS): consumed, not owned; vendor controls the roadmap

The first five options involve a team writing code for business logic. Options 6 through 8 do not, or should not, which is a point worth examining carefully.

The Rising Cost of Distance

Options 1 through 5 are often discussed as if they differ only in cost. They don't. They differ in distance, and distance is the hidden variable that determines whether the sourcing model can support the kind of work being done.

Move from in-house to mixed teams and you introduce a new relationship. There is a contractual boundary, a different employment structure, a different set of incentives. Geographic distance is low: the external people are in the same building, probably the same city. But geography is often the least relevant dimension. What mixed teams introduce almost immediately is a clash of *company cultures*. Internal employees work within one kind of organisation, with its approval processes, tooling policies, and pace of change. External team members come from a different world, typically a delivery firm or consultancy with far fewer constraints and a very different culture around how decisions get made and what tools are acceptable. I have seen mixed teams where the biggest friction was not about code or architecture, but about whether a developer was allowed to install a particular IDE plugin. Both groups are in the same room, yet the friction is real: disagreements about tooling, working practices, and what counts as "moving fast" surface quickly and, left unaddressed, corrode the collaborative spirit that makes mixed teams worth choosing in the first place.

Move to local outsourcing and the distance increases. Communication is more mediated. There is more formality in how work is defined and reviewed. The cultural context is still largely shared: same country, same industry conventions, probably similar professional norms.

Move to near-shore and cultural distance begins to accumulate alongside geographic distance. Language may differ. Working day rhythms may diverge. Professional norms around communication, hierarchy, and decision-making may be different enough to require explicit negotiation.

Move to off-shore and all of these differences compound. Timezone gaps mean real-time collaboration is limited to a narrow window each day, or requires some team members to work outside normal hours. Country-level legal differences add compliance complexity. Regional cultural differences in how disagreement is expressed, how specifications are interpreted, and how problems are escalated can create invisible friction that neither side fully recognises or knows how to name.

The consequence is direct: the greater the distance, the more explicit and complete the specification must be before work begins. A mixed team can operate with a rough brief and a conversation. A near-shore team needs more structure. An off-shore team, to function well, typically needs detailed specifications, clear acceptance criteria, and explicit change management processes.

Distance demands specification. Complexity forbids it. When both are present in the same sourcing decision, something must give — and what usually gives is either the quality of the specification (the work fails) or the speed and adaptability of the delivery (flow dies). I will return to this tension when discussing the Cynefin heuristics.

And to be clear: this is not a criticism of off-shore teams. Some of the strongest engineers I have worked with were in Bangalore and Kraków. The constraint is not competence: it is the structural overhead the working relationship demands. Fast-moving, experimental, strategically important work with emergent requirements does not fit the specification-dependent relationships that distance creates.

The Critical Distinction Between COTS with Customisation, COTS with Minimal Customisation, and SaaS

The distinction between the three market consumption options deserves more care than it usually receives.

COTS with Customisation (option 6) means adopting a commercial product and deliberately investing in configuring, extending, or adapting it to your specific needs. The key word is deliberately. The risk is well-known: customisation creates a proprietary surface that must be maintained through every vendor update, accumulates technical debt, and eventually becomes more costly than a full rebuild. Option 6 is viable, but it requires discipline about scope and a clear view of what the customisation is actually achieving.

COTS with Minimal Customisation (option 7) makes a different and stronger commitment: we adopt this product and we say no to customisation, even when the product would technically permit it. This distinction matters enormously in practice. During a recent conference discussion about Salesforce, the point came up sharply. Salesforce is a SaaS product, but it is extraordinarily customisable: custom objects, custom logic, custom workflows, Apex code, entire applications built on its platform. Organisations routinely build systems of significant complexity on top of Salesforce and call the result "just using Salesforce."

That is not option 7. That is option 6, wearing a SaaS label.

Option 7 means resisting the temptation to customise even when the capability exists. It means accepting the product's opinions about how work should flow, rather than bending the product to match your existing processes. Every customisation is a future maintenance liability and a future upgrade complication. For a non-differentiating domain, accepting that liability makes no strategic sense.

SaaS (option 8) makes the commitment explicit at a structural level. You do not own the software. You do not run it. You subscribe to it. The vendor controls the roadmap, the infrastructure, the upgrade cycle. Your integration surface is an API. For Generic domains at Commodity stage, this is the correct answer.

The shared principle across options 6, 7, and 8 is a progressive reduction in what your organisation takes responsibility for. The question to ask at each step is not "how much does this cost?" but "how much of this can we safely not own?" For non-differentiating domains, the answer is usually: more than you currently do.

The Heuristics

Let us first look at the heuristics you can apply directly when using just one of the three lenses. After that, I will introduce a set of heuristics that bring the three lenses together.

DDD Lens Heuristics

Two rules anchor the DDD lens.

Rule one: Never give full ownership of a Core domain to an external provider. You can use mixed teams for Core domains, particularly in modernisation contexts where you are rebuilding internal capability. But full ownership — where the vendor controls the codebase, the architecture decisions, and the deployment — is incompatible with a domain that defines your competitive edge. When that vendor relationship ends, the ownership ends with it.

Rule two: In modernisation, use mixed teams to rebuild internal capability in Core domains. Mixed teams are not a permanent state. They are a transitional mechanism. The goal is internal ownership restored, using the external presence to transfer knowledge in, not to extract knowledge out.

DDD Heuristics
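The two DDD rules are simple enough to express as a check against a proposed delivery option. This is a rough sketch under assumptions: the option numbering follows the eight delivery options listed earlier, the function name `ddd_findings` and the `modernising` flag are invented for illustration, and treating every option beyond mixed teams as "full external ownership" is one reading of rule one, not a definition.

```python
from enum import Enum, IntEnum
from typing import List


class DomainType(Enum):
    CORE = "core"
    SUPPORTING = "supporting"
    GENERIC = "generic"


class Option(IntEnum):
    """The eight delivery options, numbered as in the text."""
    IN_HOUSE = 1
    MIXED_TEAMS = 2
    LOCAL_OUTSOURCED = 3
    NEAR_SHORE = 4
    OFF_SHORE = 5
    COTS_CUSTOMISED = 6
    COTS_MINIMAL = 7
    SAAS = 8


def ddd_findings(ddd: DomainType, option: Option, modernising: bool = False) -> List[str]:
    """Return findings for one domain; an empty list means neither rule fires."""
    findings = []
    # Rule one: assumption that options 3 to 8 all place ownership outside.
    if ddd is DomainType.CORE and option > Option.MIXED_TEAMS:
        findings.append("rule one: Core domain is fully owned by an external party")
    # Rule two: mixed teams in modernisation are a transition, not a destination.
    if ddd is DomainType.CORE and modernising and option is Option.MIXED_TEAMS:
        findings.append("rule two: mixed team is transitional; plan the return to in-house")
    return findings


print(ddd_findings(DomainType.CORE, Option.OFF_SHORE))
print(ddd_findings(DomainType.CORE, Option.MIXED_TEAMS, modernising=True))
```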

Cynefin Lens Heuristics

Three rules govern the Cynefin lens. All three connect back to the distance argument above.

Rule one: Complexity always constrains your outsourcing ceiling. The ceiling for how far you can outsource is set by the Cynefin classification, not by cost optimisation. A Complex domain caps at in-house or mixed teams. A Complicated domain can extend to local or near-shore outsourcing with expert-led teams. A Clear domain can safely reach the full range of options.

Rule two: Any sourcing model in a Complex domain that relies on upfront specification will fail. Fixed-price contracts, detailed statements of work, milestone-based payments — these are all specification-dependent models. In a Complex domain, the specification is precisely what you cannot produce in advance. The sourcing model must be capable of operating without it.

Rule three: The more unpredictable the domain, the closer the team must be to the organisation. "Closer" means the full stack of distance I described earlier: communication distance, cultural distance, legal distance, specification formality. Complex domains require a team that can operate in ambiguity, respond to emerging understanding, and change direction without the overhead of formal change management. That describes in-house and mixed teams. It does not describe near-shore or off-shore — not because those teams lack talent, but because the specification completeness that distance demands is structurally incompatible with the iterative, emergent work that Complex domains require. Sending strategically important, experimentally structured, architecturally unresolved work offshore is not a cost-saving exercise. It is a setup for failure.

Cynefin Heuristics
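Rule one of the Cynefin lens can be read as a lookup from classification to the furthest delivery option that remains viable. A minimal sketch, assuming the eight options sit on a single axis numbered 1 to 8 as listed earlier; the names `CEILING`, `outsourcing_ceiling`, and `within_ceiling` are illustrative. Chaotic is deliberately left without a ceiling here, since the rules above do not state one.

```python
from enum import Enum, IntEnum
from typing import Optional


class Cynefin(Enum):
    CLEAR = "clear"
    COMPLICATED = "complicated"
    COMPLEX = "complex"
    CHAOTIC = "chaotic"


class Option(IntEnum):
    """The eight delivery options, numbered as in the text."""
    IN_HOUSE = 1
    MIXED_TEAMS = 2
    LOCAL_OUTSOURCED = 3
    NEAR_SHORE = 4
    OFF_SHORE = 5
    COTS_CUSTOMISED = 6
    COTS_MINIMAL = 7
    SAAS = 8


# Furthest option each classification can reach, per rule one.
CEILING = {
    Cynefin.COMPLEX: Option.MIXED_TEAMS,     # in-house or mixed teams
    Cynefin.COMPLICATED: Option.NEAR_SHORE,  # up to local or near-shore
    Cynefin.CLEAR: Option.SAAS,              # full range
}


def outsourcing_ceiling(classification: Cynefin) -> Optional[Option]:
    """Return the ceiling, or None where the rules give no answer (Chaotic)."""
    return CEILING.get(classification)


def within_ceiling(classification: Cynefin, option: Option) -> bool:
    ceiling = outsourcing_ceiling(classification)
    return ceiling is not None and option <= ceiling


print(within_ceiling(Cynefin.COMPLEX, Option.OFF_SHORE))
print(within_ceiling(Cynefin.COMPLICATED, Option.NEAR_SHORE))
```

The point of the lookup is that cost never appears in it: the ceiling is fixed by the kind of problem before any price comparison starts.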

Wardley Lens Heuristics

There are three rules for the Wardley lens.

Rule one: Never build what the market already provides at Commodity stage. Every engineer building a commodity component is an engineer not building your Core.

Rule two: Never outsource what the market doesn't yet provide at Genesis stage. At Genesis, there is no external expertise to outsource to. The problem is too new. If this domain matters to you, you build it yourself or you don't have it.

Rule three: High visibility demands internal ownership of judgement, even when execution can be outsourced. A high-visibility component sits directly in the customer experience. Its quality reflects your organisation's quality. The execution can be delegated. The judgement cannot.

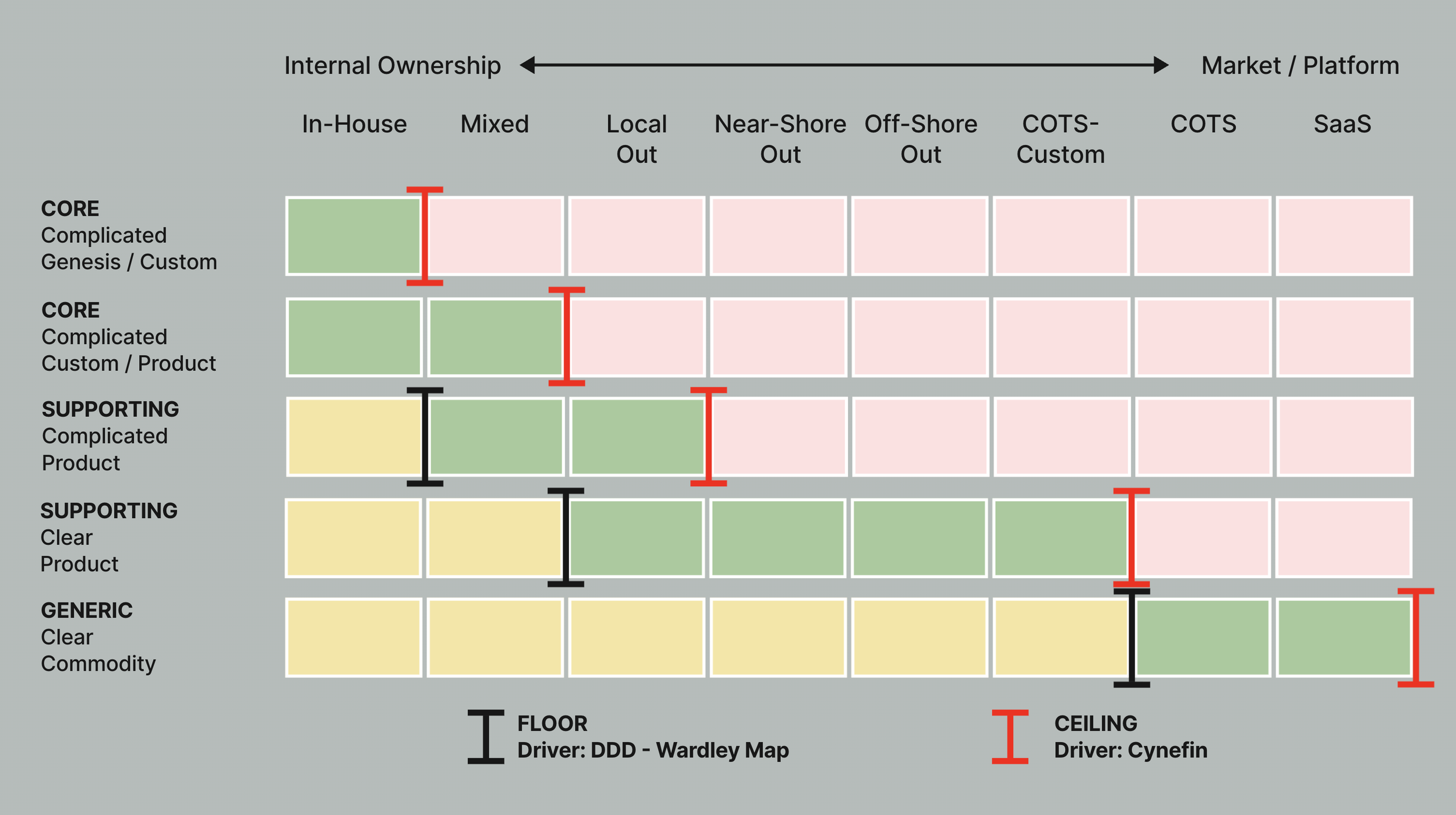
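The three Wardley rules can likewise be sketched as checks against a proposed option. Assumptions to note: "building" is taken to mean options 1 to 5 (a team writing code), "outsourcing" is taken to mean options 3 to 5 (an external team), and the function name `wardley_findings` and its `judgement_internal` flag are invented for this example.

```python
from enum import Enum, IntEnum
from typing import List


class Evolution(Enum):
    GENESIS = "genesis"
    CUSTOM_BUILD = "custom build"
    PRODUCT = "product/rental"
    COMMODITY = "commodity/utility"


class Option(IntEnum):
    """The eight delivery options, numbered as in the text."""
    IN_HOUSE = 1
    MIXED_TEAMS = 2
    LOCAL_OUTSOURCED = 3
    NEAR_SHORE = 4
    OFF_SHORE = 5
    COTS_CUSTOMISED = 6
    COTS_MINIMAL = 7
    SAAS = 8


def wardley_findings(evolution: Evolution, option: Option,
                     high_visibility: bool = False,
                     judgement_internal: bool = True) -> List[str]:
    """Return the Wardley rules a proposed option breaks; empty means none."""
    findings = []
    # Rule one: options 1 to 5 all involve a team writing code, i.e. building.
    if evolution is Evolution.COMMODITY and option <= Option.OFF_SHORE:
        findings.append("rule one: building what the market provides as a commodity")
    # Rule two: options 3 to 5 hand the work to an external team.
    if evolution is Evolution.GENESIS and Option.LOCAL_OUTSOURCED <= option <= Option.OFF_SHORE:
        findings.append("rule two: outsourcing a Genesis-stage component")
    # Rule three: execution may be delegated, judgement may not.
    if high_visibility and not judgement_internal:
        findings.append("rule three: high-visibility component without internal judgement")
    return findings


print(wardley_findings(Evolution.COMMODITY, Option.IN_HOUSE))
print(wardley_findings(Evolution.GENESIS, Option.NEAR_SHORE))
```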

Combining the Lenses: Ceiling and Floor

When you overlay the three lenses on the eight delivery options, two forces emerge.

The Ceiling is driven by Cynefin. It marks the maximum viable point on the outsourcing axis for a given domain. As complexity increases, the ceiling drops. For a Core domain classified as Complex in Genesis — your most strategically sensitive situation — the ceiling is at option 1: in-house, full stop. The ceiling also embeds the distance argument: Complex domains need close, high-bandwidth collaboration, which constrains not just the maximum delegation but the maximum distance at which any delegation can happen.

The Floor is driven by DDD and Wardley combined. It marks the minimum viable investment level — the point below which you are over-investing relative to the domain's strategic importance and market availability. For a Generic domain at Commodity stage, the floor rises all the way to options 7 and 8.

The viable zone — the space between ceiling and floor — is where the sourcing decision actually lives.

For Generic + Clear + Commodity domains specifically, the floor rises all the way to COTS and SaaS. Not off-shore outsourcing, not COTS with customisation. Pure consumption. The argument here is not just about cost but about coordination overhead. Outsourcing still creates a team relationship that must be managed. Only COTS at option 7 (with a genuine commitment to minimal customisation) and SaaS at option 8 eliminate that overhead entirely.

Saying no to customisation is where this gets hard in practice. Product teams will always find reasons why their situation requires a special configuration. This is precisely where self-discipline becomes the critical ingredient. I sometimes say that agility requires more self-discipline than any waterfall project, because agile ways of working do not impose the guardrails that a rigid methodology does. The same applies here. Resisting the urge to adjust a Generic, Clear, Commodity product to your own preferences — when the capability to do so is sitting right there in the admin panel — is an act of deliberate restraint. The Generic + Clear + Commodity classification is the answer to the pressure: this domain is not where we differentiate. The cognitive load of owning and maintaining the customisation is a tax on the teams that should be building Core. We say no, not because the product prevents us, but because our strategic classification — and our self-discipline — tells us to.

The three lenses combined.

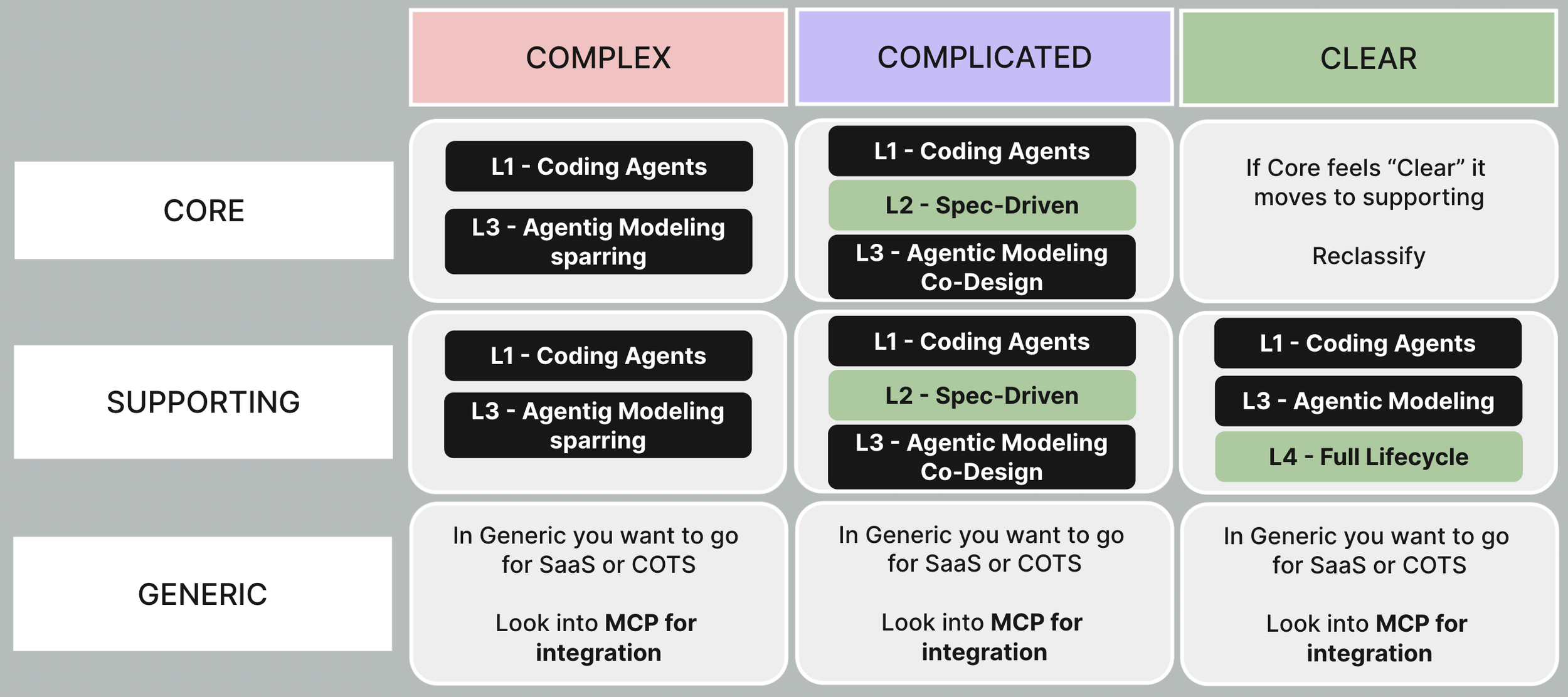
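The ceiling and floor can be combined into a small function that returns the viable zone for one domain. This is a sketch under stated assumptions, not a complete encoding of the heuristics: it only includes the combinations spelled out above (the Cynefin ceilings, the Core + Complex + Genesis case, and the Generic + Commodity floor), it defaults the floor to option 1 wherever the text states none, and it leaves Chaotic out. All names are illustrative.

```python
from enum import Enum, IntEnum
from typing import List


class DomainType(Enum):
    CORE = "core"
    SUPPORTING = "supporting"
    GENERIC = "generic"


class Cynefin(Enum):
    CLEAR = "clear"
    COMPLICATED = "complicated"
    COMPLEX = "complex"


class Evolution(Enum):
    GENESIS = "genesis"
    CUSTOM_BUILD = "custom build"
    PRODUCT = "product/rental"
    COMMODITY = "commodity/utility"


class Option(IntEnum):
    IN_HOUSE = 1
    MIXED_TEAMS = 2
    LOCAL_OUTSOURCED = 3
    NEAR_SHORE = 4
    OFF_SHORE = 5
    COTS_CUSTOMISED = 6
    COTS_MINIMAL = 7
    SAAS = 8


CYNEFIN_CEILING = {
    Cynefin.COMPLEX: Option.MIXED_TEAMS,
    Cynefin.COMPLICATED: Option.NEAR_SHORE,
    Cynefin.CLEAR: Option.SAAS,
}


def ceiling(ddd: DomainType, cynefin: Cynefin, evolution: Evolution) -> Option:
    """Maximum viable option, driven by Cynefin."""
    if (ddd, cynefin, evolution) == (DomainType.CORE, Cynefin.COMPLEX, Evolution.GENESIS):
        return Option.IN_HOUSE  # the most strategically sensitive case
    return CYNEFIN_CEILING[cynefin]


def floor(ddd: DomainType, evolution: Evolution) -> Option:
    """Minimum viable option, driven by DDD and Wardley combined."""
    if ddd is DomainType.GENERIC and evolution is Evolution.COMMODITY:
        return Option.COTS_MINIMAL
    return Option.IN_HOUSE  # assumption: no floor is stated for other combinations


def viable_zone(ddd: DomainType, cynefin: Cynefin, evolution: Evolution) -> List[Option]:
    """Options between floor and ceiling; empty if the two rules conflict."""
    low, high = floor(ddd, evolution), ceiling(ddd, cynefin, evolution)
    return [option for option in Option if low <= option <= high]


print(viable_zone(DomainType.GENERIC, Cynefin.CLEAR, Evolution.COMMODITY))
print(viable_zone(DomainType.CORE, Cynefin.COMPLEX, Evolution.GENESIS))
```

For the two anchor cases the sketch returns options 7 and 8 for Generic + Clear + Commodity, and option 1 alone for Core + Complex + Genesis. Everything in between still needs the conversation the heuristics are meant to provoke.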

Adding AI: A Different Decision

In 2026, every engineering organisation needs a sourcing policy for AI delegation, not just a tooling policy. The question is not "do we use AI?" It is "how deeply do we delegate to AI, in which domains, and what governs that decision?"

I propose four levels of AI involvement depth:

Level 1: Coding Agents. AI accelerates execution. GitHub Copilot, Cursor, Claude Code. The human makes all meaningful decisions. AI writes, suggests, and completes code. This is tooling, not delegation.

Level 2: Spec-Driven Agents. The human writes a detailed specification. AI implements end-to-end from that specification. The human reviews, corrects, and accepts. The cognitive work of design stays human; the work of implementation is delegated.

Level 3: Agentic Modeling. AI and human co-create the specification together. The AI is a thinking partner in the design and modeling phase: it questions assumptions, surfaces alternatives, and proposes structures. This goes beyond execution assistance into collaborative intellectual work.

Level 4: Full Lifecycle Agentic. AI covers the full software development lifecycle, from initial analysis and planning through architecture, stories, development, and QA. BMad-style orchestration falls here. The human sets direction and reviews outcomes. AI manages the process.

Why Wardley Leaves the Room

For AI delegation decisions, the Wardley lens drops out. This surprised me at first, but the logic is clean.

Wardley's contribution to the sourcing decision is its evolution axis: does the market provide this? That question was answered when you decided on options 1 through 8. If you are asking about AI delegation, you have already decided to have a team writing code. Wardley has done its work and left the room.

What remains is the cognitive question: can the process of solving this problem be specified, delegated, or must it remain emergent? That is precisely what Cynefin answers. The ownership question (how much human judgement must remain engaged, and at what seniority) is what DDD answers. DDD and Cynefin together are sufficient. Adding Wardley would not change the answer. Evolution describes the market, not the mind.

The AI Heuristics

Mapping the four levels to DDD × Cynefin classifications:

Core + Complex: Level 1 always — coding agents are universal tooling rather than a delegation decision. Level 3 as sparring partner: AI as intellectual challenger, exploring the problem space, pressure-testing emergent ideas. In a Complex domain, AI's role is not to produce answers but to help you ask better questions. Levels 2 and 4 are dangerous here: you cannot spec what must emerge.

Core + Complicated: Level 1 always. Level 2 recommended: the domain is specifiable, senior expertise governs the spec, AI implements. Level 3 viable as co-design support. Level 4 inappropriate: Core ownership must stay with the human.

Supporting + Complex: Same AI ceiling as Core + Complex. The Cynefin classification sets the limit regardless of DDD. Level 1 and Level 3 sparring only.

Supporting + Complicated: Level 1 always. Level 2 recommended. Level 3 viable.

Supporting + Clear: Full range viable. Evaluation of Level 4 is recommended: the domain is stable, well-understood, and fully specifiable. Full lifecycle delegation genuinely accelerates delivery without meaningful risk.

Core + Clear: This combination rarely arises as a stable state. Core domains are differentiating; Clear domains have known-good solutions. If you classify a domain as both, it is almost certainly drifting toward Supporting. Treat the combination as diagnostic rather than as a place to make permanent sourcing decisions.

Chaotic domains: No AI delegation decision at all. In Chaotic situations you are in crisis response mode — establishing constraints, stabilising the system, getting back to a state where classification is even possible. The sourcing question is irrelevant until the chaos is contained. Use internal expertise and bring in external advisors if needed, but do not delegate to AI agents in an environment where nobody yet understands what is happening.

AI Delegation Depth Heuristics
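The mapping above is compact enough to hold in a lookup table. A minimal sketch, with illustrative names (`Advice`, `MATRIX`, `ai_delegation`): the level sets list only what the heuristics explicitly allow, so Level 4 is left out of Supporting + Complicated because the text does not mention it there, which is an interpretation rather than a stated prohibition.

```python
from enum import Enum
from typing import FrozenSet, NamedTuple


class DomainType(Enum):
    CORE = "core"
    SUPPORTING = "supporting"
    GENERIC = "generic"


class Cynefin(Enum):
    CLEAR = "clear"
    COMPLICATED = "complicated"
    COMPLEX = "complex"
    CHAOTIC = "chaotic"


class Advice(NamedTuple):
    levels: FrozenSet[int]  # AI involvement levels 1 to 4 considered usable
    note: str


MATRIX = {
    (DomainType.CORE, Cynefin.COMPLEX): Advice(frozenset({1, 3}), "Level 3 as sparring partner only"),
    (DomainType.CORE, Cynefin.COMPLICATED): Advice(frozenset({1, 2, 3}), "Level 2 recommended"),
    (DomainType.SUPPORTING, Cynefin.COMPLEX): Advice(frozenset({1, 3}), "same ceiling as Core + Complex"),
    (DomainType.SUPPORTING, Cynefin.COMPLICATED): Advice(frozenset({1, 2, 3}), "Level 2 recommended"),
    (DomainType.SUPPORTING, Cynefin.CLEAR): Advice(frozenset({1, 2, 3, 4}), "evaluate Level 4"),
}


def ai_delegation(ddd: DomainType, cynefin: Cynefin) -> Advice:
    """Look up the delegation advice for one DDD x Cynefin combination."""
    if ddd is DomainType.GENERIC:
        return Advice(frozenset(), "outside the matrix: consume, do not build")
    if cynefin is Cynefin.CHAOTIC:
        return Advice(frozenset(), "stabilise first; no AI delegation decision")
    if (ddd, cynefin) == (DomainType.CORE, Cynefin.CLEAR):
        return Advice(frozenset(), "diagnostic: probably drifting toward Supporting")
    return MATRIX[(ddd, cynefin)]


print(sorted(ai_delegation(DomainType.CORE, Cynefin.COMPLEX).levels))
print(ai_delegation(DomainType.CORE, Cynefin.CLEAR).note)
```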

Generic Domains and MCP

Generic domains fall outside the AI delegation matrix entirely, because the AI delegation question only arises when a team is writing code. For Generic domains, the answer is already: no team.

SaaS and COTS solve the "don't build Generic" problem for humans. But there has historically remained an integration tax: someone still needs to write the glue code connecting your systems to the Generic components you consume. That integration layer created a persistent argument for keeping some engineering capacity in Generic domains.

The Model Context Protocol (MCP) is dissolving this argument, at least for organisations whose vendor ecosystem has adopted it. MCP is a standardised protocol through which AI agents can interact with external services directly. Stripe, Salesforce, GitHub, ServiceNow, SAP: where MCP servers exist, AI agents can connect without bespoke integration code. The integration layer itself is being commoditised.

I want to be careful not to overstate this. MCP adoption in enterprise contexts is still early. Many vendors are in pilot phases. But the direction is clear, and for organisations whose tool landscape already supports MCP, the complete picture for Generic domains is: SaaS/COTS plus MCP. No team. No bespoke integration. No AI delegation levels, because there is nothing to delegate.
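To make "connect without bespoke integration code" concrete, here is a minimal sketch of what an agent sends when it invokes a tool on an MCP server. MCP messages are JSON-RPC 2.0, and tool invocation uses the `tools/call` method with a tool name and an arguments object. The tool name `create_invoice` and its arguments are hypothetical; a real server advertises its own tools, and the transport and initialisation handshake are omitted here.

```python
import json
from itertools import count

# Simple monotonically increasing request ids for this sketch.
_request_ids = count(1)


def mcp_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialise a JSON-RPC 2.0 request for MCP's tools/call method."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


# Hypothetical tool and arguments, for illustration only.
message = mcp_tool_call(
    "create_invoice",
    {"customer_id": "cus_123", "amount_cents": 4200},
)
print(message)
```

The point is that the message shape is the same whichever vendor sits behind the server. What used to be vendor-specific glue code becomes a tool name and a set of arguments.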

The question for Generic domains is not "how do we build this more efficiently?" It is "why are we building this at all?" And in 2026, for a widening set of Generic domains, the answer is: we aren't. We consume and connect.

The progression across the last fifteen years is worth stating plainly:

The 2010s insight: don't build Generic, buy it.

The 2020s insight: don't outsource Core, own it.

The 2026 insight: don't even integrate Generic, connect via MCP.

Each layer raises the floor on what deserves human investment, concentrating that investment more precisely where differentiation actually lives.

What This Means in Practice

The framework described here is not a process. It is not a governance procedure or a checklist. It is a set of lenses for having better conversations — conversations that are currently not happening because the people who understand domains are not in the room when sourcing decisions are made.

Making it practical requires three organisational changes that are harder than the framework itself.

First: architecture and procurement need to be in the same room when sourcing decisions are made. Not sequentially, with the architects reviewing the contract after procurement has signed it. Simultaneously, with architecture driving the classification and procurement finding commercial structures that serve it. This is the elevator ride in practice.

Why doesn't this already happen? In my experience, three reasons. Procurement reports to finance; architecture reports to engineering. They operate on different timelines — procurement runs on contract cycles and budget years, architecture thinks in product roadmaps and capability horizons. And there is a perception problem: many procurement leaders genuinely believe sourcing is a commercial decision, not a technical one. It is both. The architect's job is to make that visible before the RFP goes out, not after the post-mortem comes back.

Second: the vocabulary needs to become shared. Core, Supporting, Generic. Complex, Complicated, Clear. Genesis, Commodity. These are not architect jargon. They are the minimum shared language for a sourcing conversation that is not flying blind. The investment in making procurement fluent in this vocabulary pays back immediately in better decisions.

Third: the heuristics need to become explicit policy, not implicit knowledge. The heuristics described above should be written down, referenced in sourcing decisions, and used to challenge proposals that violate them. Implicit knowledge lives in the heads of the architects who happen to be in the room. Explicit policy survives turnover, survives reorganisation, and can be challenged and improved over time.
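One way to make a heuristic explicit is to write it as a check that a sourcing proposal either passes or fails. The sketch below is illustrative only: the `SourcingProposal` fields and the three rules are my assumptions about how the heuristics in this post (own Core, buy Generic, keep Complex work close) might be encoded, not an existing policy engine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourcingProposal:
    name: str
    domain: str         # "core", "supporting" or "generic"
    context: str        # "clear", "complicated", "complex" or "chaotic"
    delivery: str       # "internal", "vendor" or "saas"
    distant_team: bool  # True if the delivering team is remote from the domain experts


def violations(proposal: SourcingProposal) -> list[str]:
    """Return the heuristics a proposal violates (empty list if none)."""
    found = []
    if proposal.domain == "core" and proposal.delivery == "vendor":
        found.append("Core domain outsourced: own it instead.")
    if proposal.domain == "generic" and proposal.delivery == "internal":
        found.append("Generic domain built internally: buy it instead.")
    if proposal.context == "complex" and proposal.distant_team:
        found.append("Complex work sent to a distant team: keep it close to the domain.")
    return found


# Hypothetical proposal resembling the opening story of this post.
proposal = SourcingProposal(
    name="processing-service",
    domain="core",
    context="complex",
    delivery="vendor",
    distant_team=True,
)
for problem in violations(proposal):
    print(problem)
```

A check like this does not make the decision. It gives whoever is in the room a written rule to point at, and a concrete thing to argue with when the rule is wrong.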

The Closing Argument

The biggest bottleneck to fast flow is not your architecture. Your architecture might be excellent. The biggest bottleneck is the sourcing decisions that were made without architectural sensibility: the outsourced Core domain that is now a vendor dependency, the Generic component that has an internal team maintaining it, the Complex exploratory work that was sent offshore because it looked cheaper on a spreadsheet, the Salesforce implementation that has accumulated so much Apex code it is effectively a custom-built CRM wearing a subscription label.

These decisions compound. A vendor dependency in a Core domain does not just slow that domain. It slows every team that depends on it. A Generic component with an internal maintenance burden occupies headcount that could be working on Core. Complex strategic work sent to a distant team comes back late, wrong, or both — and the post-mortem blames the team rather than the structural incompatibility of the arrangement.

The architectural consequences of poor sourcing decisions are not local. They propagate through the entire delivery system.

Getting this right is not primarily a technical problem. It is a conversation problem, and it is an elevator problem. Let me reference Gregor Hohpe one last time: the architects need to ride the elevator. The framework needs to travel with them. And the leadership needs to be willing to hear that a cheaper contract is sometimes the most expensive decision the organisation makes.